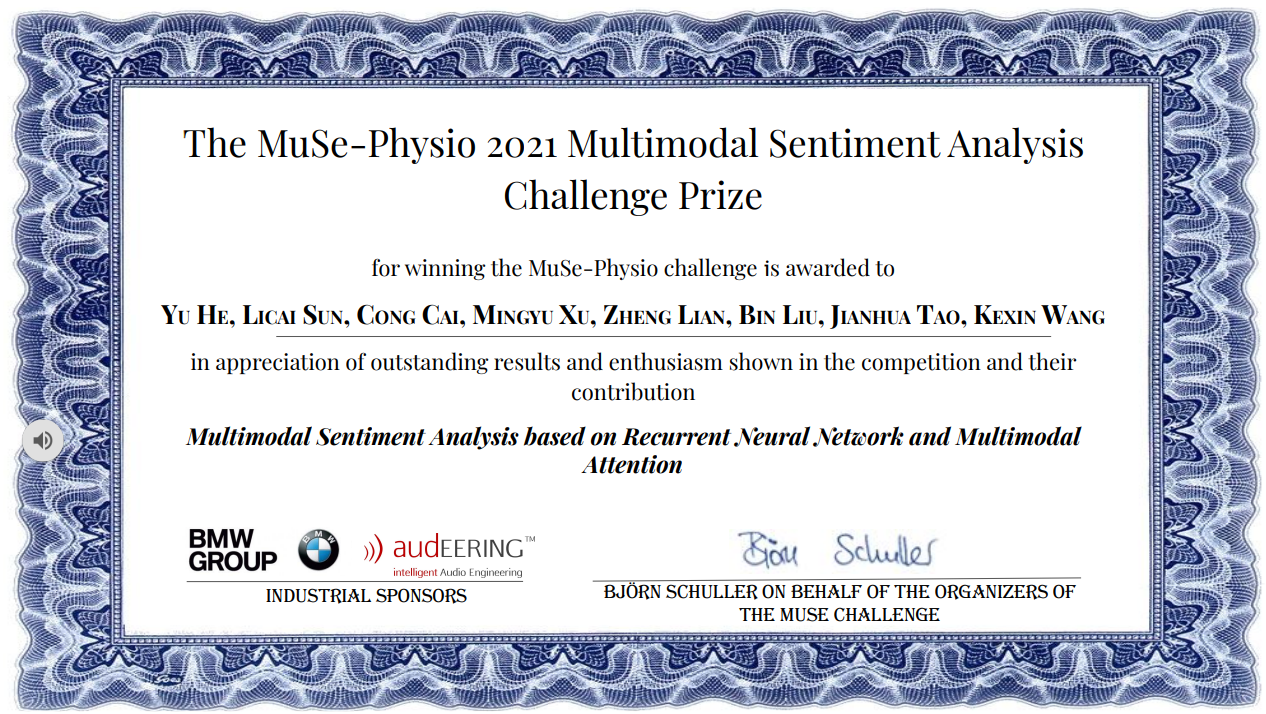

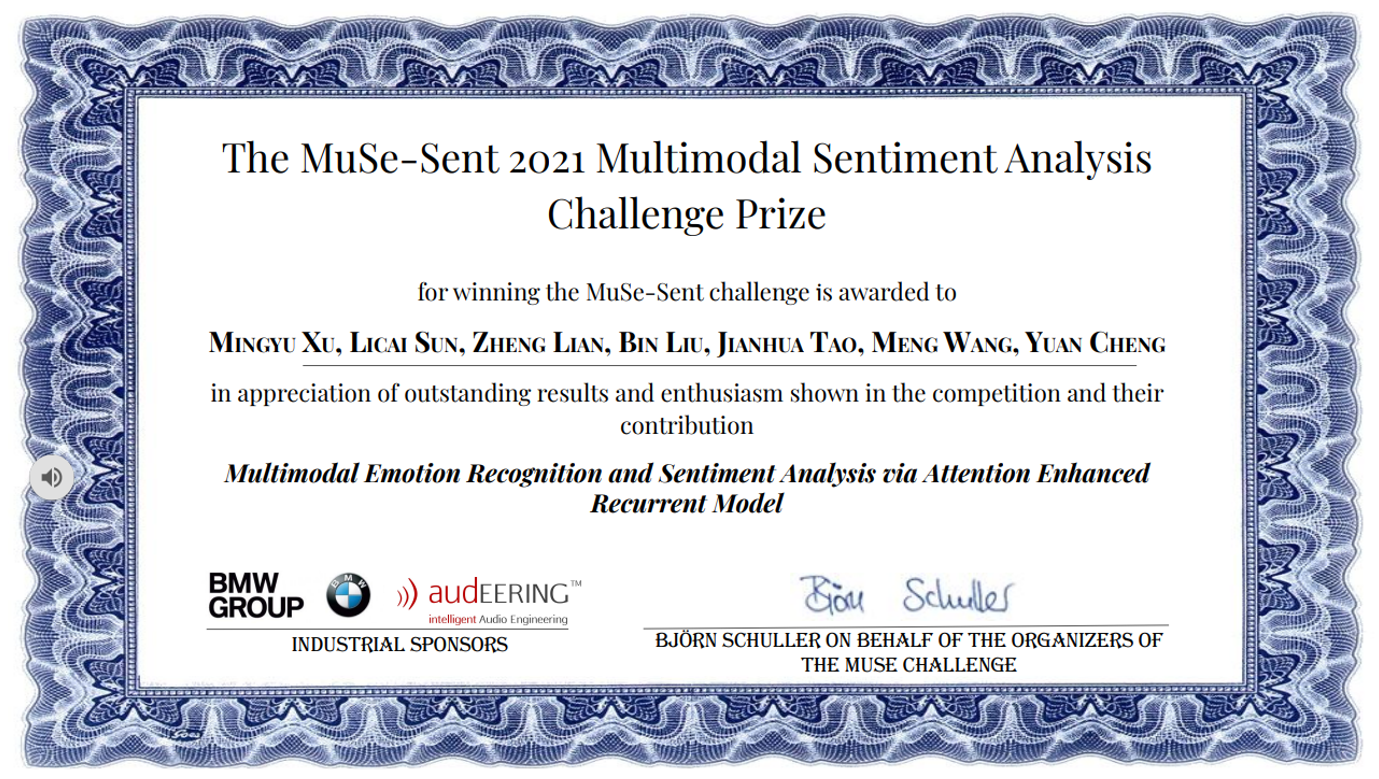

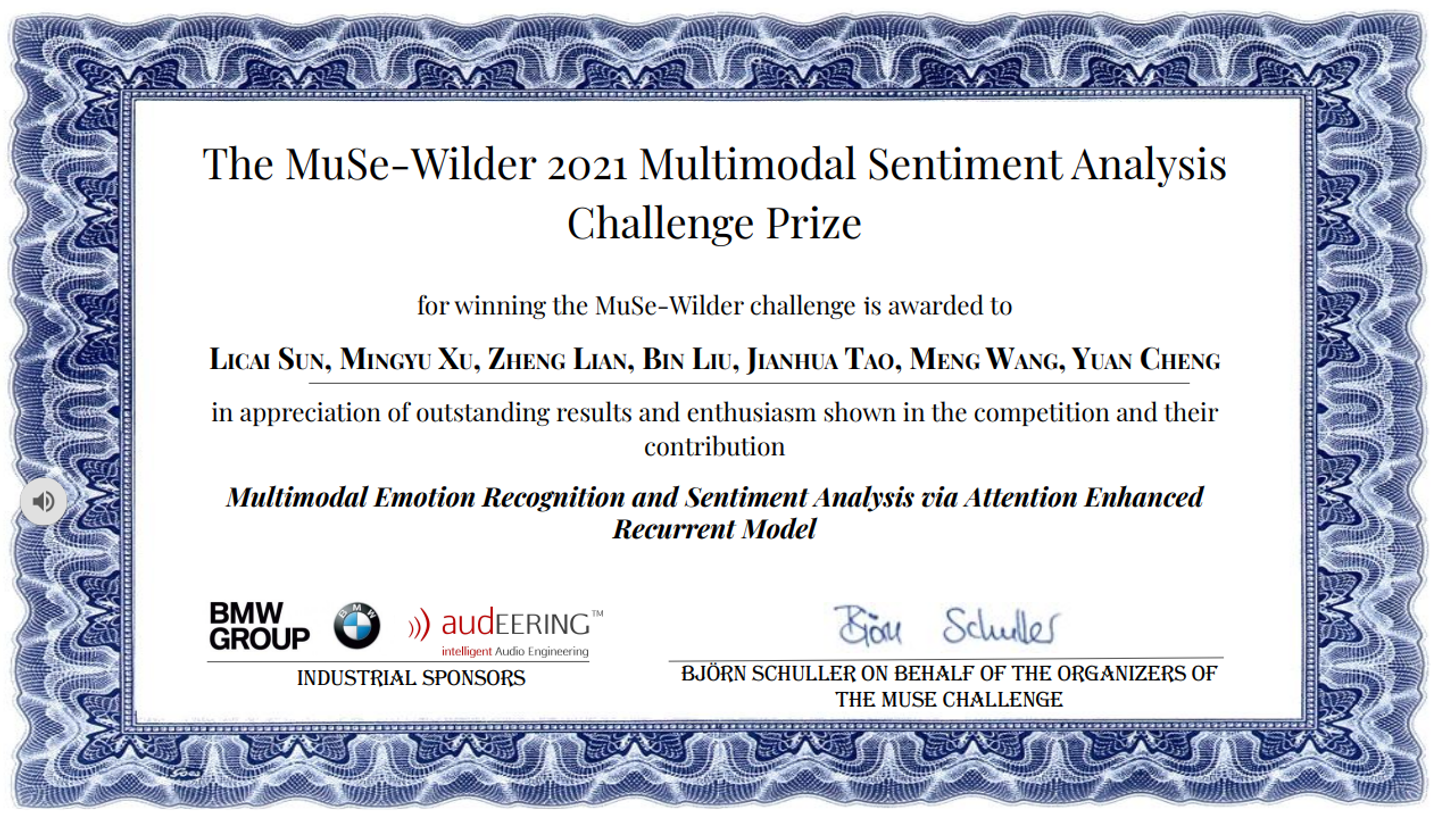

The results of the Multimodal Sentiment in Real-life Media Challenge 2021 (MuSe 2021) were recently announced at ACM Multimedia 2021. The intelligent interaction team from the National Key Laboratory of Pattern Recognition at CASIA (Institute of Automation, Chinese Academy of Sciences) won first place in three sub-challenges: multimodal continuous emotion recognition (MuSe-Wilder), multimodal discrete emotion recognition (MuSe-Sent) and multimodal physiological arousal analysis (MuSe-Physio).

This year's challenge focuses on robust multimodal analysis of subtle emotions in natural, real-world settings. It places greater emphasis on evaluating deep multimodal fusion that incorporates semantic information, and requires participants to predict emotional states from the provided audio, video and text data recorded in the wild. Across the three tasks, our team modeled the time series with recurrent neural networks and self-attention, extracted cross-modal information with several fusion strategies such as multimodal attention, and addressed the problems of small sample size and robustness through data augmentation and a concordance correlation coefficient (CCC) loss function. This work contributes to the advancement of multimodal emotion analysis technology.
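For readers unfamiliar with the CCC loss mentioned above: the concordance correlation coefficient rewards predictions that are both correlated with the labels and matched in mean and scale, and training typically minimizes `1 - CCC`. The following is a minimal NumPy sketch assuming the standard definition, CCC = 2·cov(x, y) / (var(x) + var(y) + (mean(x) - mean(y))²). The function names `ccc` and `ccc_loss` are hypothetical, and this is an illustration only, not the team's actual training code (which would use a differentiable framework).

```python
import numpy as np


def ccc(pred, gold):
    """Concordance correlation coefficient between two 1-D sequences.

    Uses population statistics (ddof=0). If both inputs are constant and
    equal, the result is undefined (0/0) and NumPy returns nan.
    """
    pred = np.asarray(pred, dtype=float)
    gold = np.asarray(gold, dtype=float)
    mean_p, mean_g = pred.mean(), gold.mean()
    var_p, var_g = pred.var(), gold.var()
    # Covariance between predictions and labels.
    cov = np.mean((pred - mean_p) * (gold - mean_g))
    # Penalizes low correlation, scale mismatch and mean shift together.
    return 2.0 * cov / (var_p + var_g + (mean_p - mean_g) ** 2)


def ccc_loss(pred, gold):
    """Hypothetical loss: 0 for perfect agreement, larger is worse."""
    return 1.0 - ccc(pred, gold)
```

Note that a constant offset lowers the score even though Pearson correlation would stay at 1: for `pred = [2, 3, 4]` and `gold = [1, 2, 3]` the CCC is 4/7, which is why the metric suits continuous emotion regression where calibrated values matter.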

MuSe 2021, a special session at ACM Multimedia 2021 comprising four tasks, was jointly organized by Imperial College London (UK), the University of Augsburg (Germany) and Nanyang Technological University (Singapore). The competition attracted 61 teams worldwide. It grew out of the well-known AVEC (Audio-Visual Emotion Challenge) series in the field of affective computing.