Because it is difficult to collect face images of the same subject across a long age span, most existing face aging methods resort to unpaired datasets to learn age mappings. However, the matching ambiguity between young and aged face images inherent in unpaired training data may lead to unnatural changes in facial attributes during the aging process, which cannot be resolved by enforcing identity consistency alone, as most existing studies do. This paper proposes an attribute-aware face aging model with wavelet-based Generative Adversarial Networks (GANs) to address these issues. Specifically, it embeds facial attribute vectors into both the generator and the discriminator of the model to encourage each synthesized elderly face image to be faithful to the attributes of its corresponding input. In addition, a wavelet packet transform (WPT) module is incorporated to improve the visual fidelity of generated images by capturing age-related texture details at multiple scales in the frequency domain. Qualitative results demonstrate the model's ability to synthesize visually plausible face images, and extensive quantitative evaluations show that the proposed method achieves state-of-the-art performance on existing datasets.
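To make the multi-scale idea behind the WPT module concrete, the following is a minimal sketch of a wavelet packet decomposition in plain NumPy. It assumes a Haar wavelet and a single-channel image with even side lengths. The wavelet family, the number of decomposition levels, and the way the resulting coefficients are consumed by the network are not stated in this article, so all of those choices, along with the function names `haar_step` and `wavelet_packet`, are illustrative only.

```python
import numpy as np

def haar_step(x):
    """One level of the 2D orthonormal Haar transform.

    x is an (H, W) array with even H and W. Returns four (H/2, W/2)
    sub-bands: one low-pass average and three detail bands.
    """
    a = x[0::2, 0::2]  # top-left pixel of every 2x2 block
    b = x[0::2, 1::2]  # top-right
    c = x[1::2, 0::2]  # bottom-left
    d = x[1::2, 1::2]  # bottom-right
    low = (a + b + c + d) / 2.0          # local average
    col_diff = (a - b + c - d) / 2.0     # left column minus right column
    row_diff = (a + b - c - d) / 2.0     # top row minus bottom row
    diag_diff = (a - b - c + d) / 2.0    # diagonal difference
    return low, col_diff, row_diff, diag_diff

def wavelet_packet(x, levels):
    """Full wavelet packet decomposition.

    Unlike the ordinary wavelet transform, which only re-splits the
    low-pass band, every sub-band is split again at every level, giving
    4**levels sub-bands of equal size.
    """
    bands = [x]
    for _ in range(levels):
        bands = [sub for band in bands for sub in haar_step(band)]
    return np.stack(bands)

# Hypothetical 8x8 grayscale patch standing in for a face image.
rng = np.random.default_rng(0)
img = rng.random((8, 8))
coeffs = wavelet_packet(img, levels=2)
print(coeffs.shape)
```

Because the Haar step used here is orthonormal, the total energy of the coefficients equals that of the input, so the decomposition only reorganizes the image content into frequency sub-bands; fine texture such as wrinkles would show up mainly in the detail bands.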

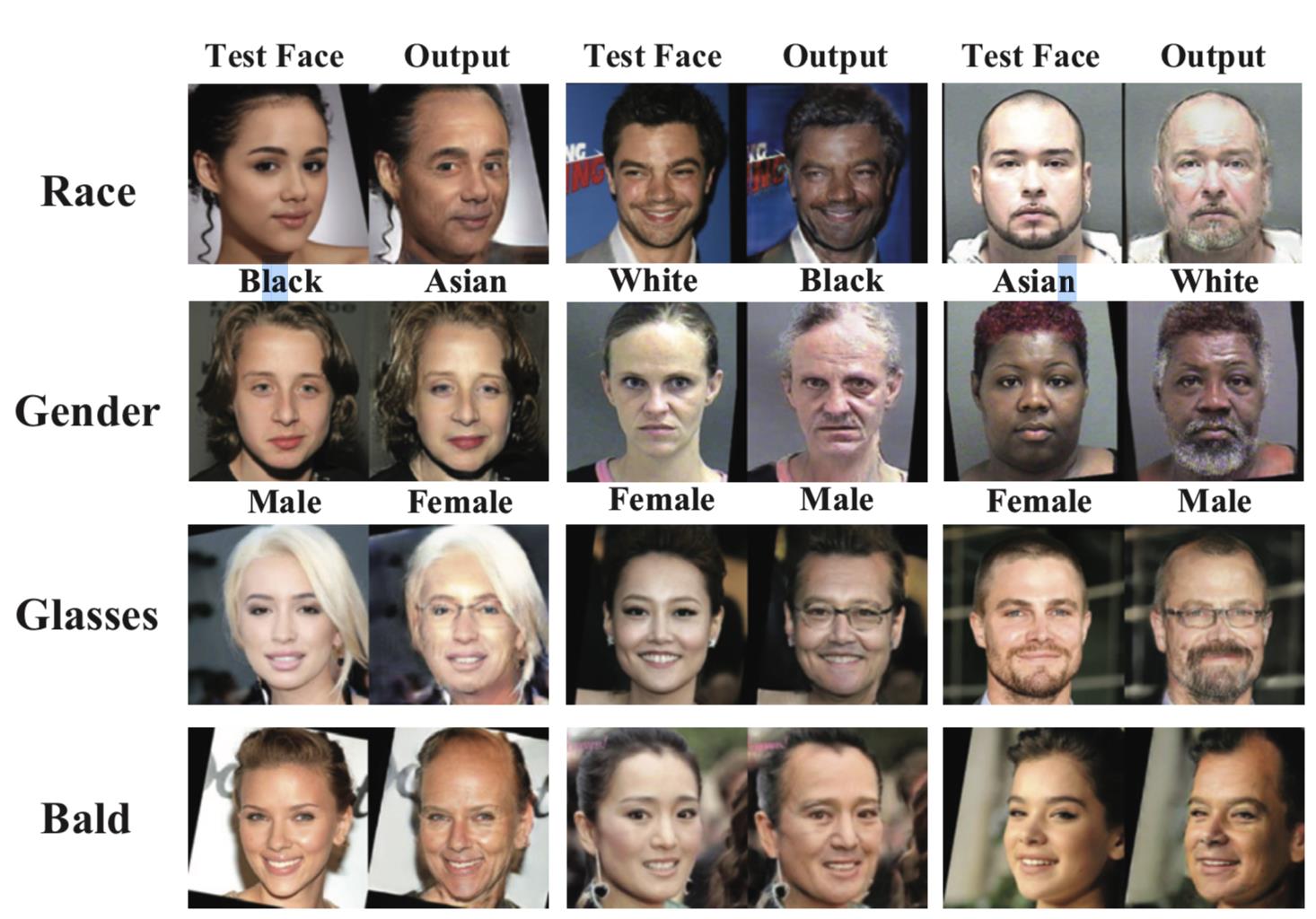

Face aging aims to render a given face image with aging effects while preserving personalized features, and it can be used to recognize faces across a long age span. Since it is extremely expensive to collect a large amount of paired data (i.e., multiple face images of the same person at different ages), most current algorithms train a non-cyclic GAN on unpaired data. In this case, no input face image has an exact aged counterpart in the dataset. Consequently, training on face image pairs with mismatched attributes can mislead the model into learning translations other than aging, causing serious ghosting artifacts and even incorrect facial attributes in the generated results. Figure 1 shows some face aging results with mismatched attributes.

Examples of face aging with mismatched facial attributes

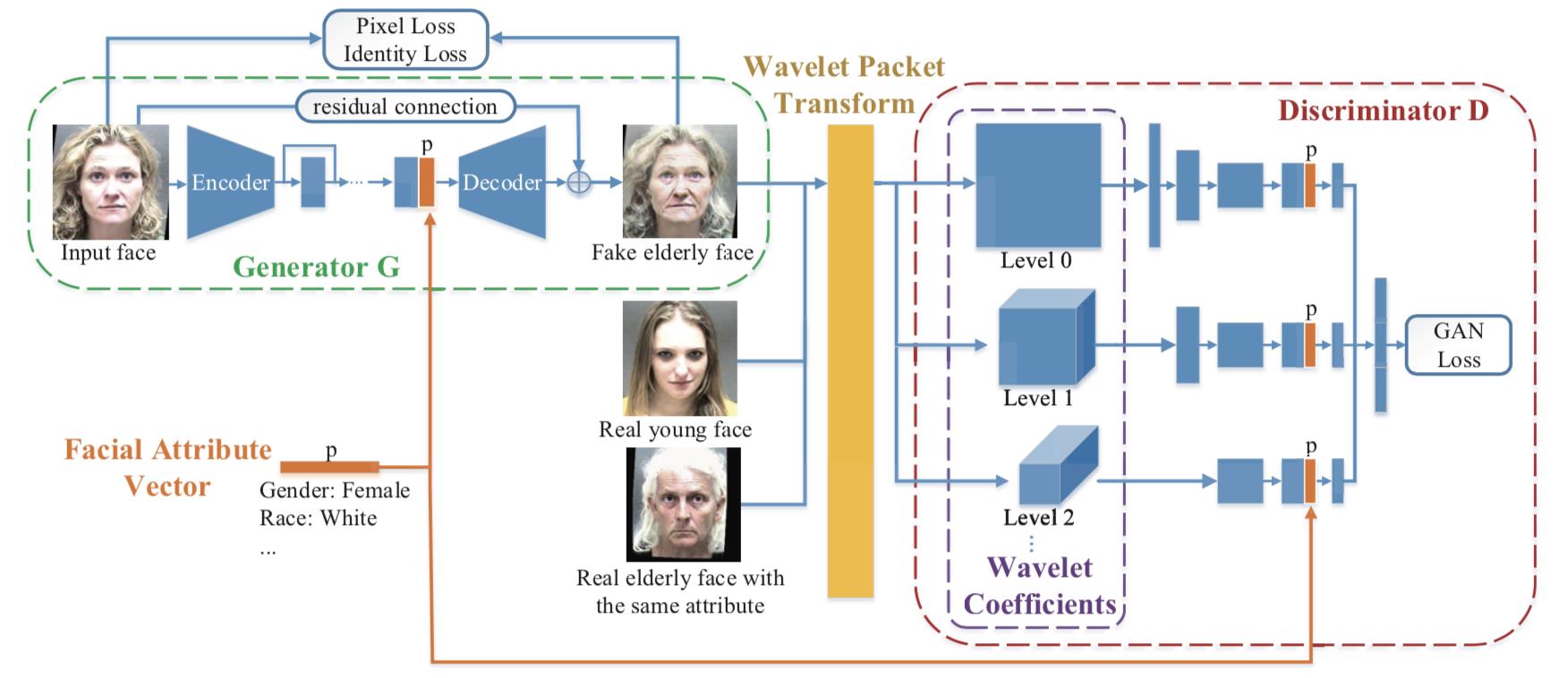

Zhenan Sun, Qi Li and Yunfan Liu, researchers at the Center for Research on Intelligent Perception and Computing, CASIA, propose a GAN-based framework to synthesize aged face images. They embed facial attribute vectors into both the generator and the discriminator to encourage generated images to be faithful to the facial attributes of the corresponding input image. In an unpaired face aging dataset, each young face image may be matched with many elderly face candidates during training, and image pairs with mismatched semantic information may mislead the model into learning translations other than aging. To solve this problem, the proposed framework takes young face images and their semantic information (i.e., facial attributes) as input and outputs visually plausible aged faces accordingly. The generator network embeds facial attributes into young face images and synthesizes aged faces. The discriminator network encourages the generated results to be indistinguishable from real face images and to possess the same attributes as the corresponding input. An overview of the proposed framework is presented in Figure 2.

An overview of the proposed face aging framework

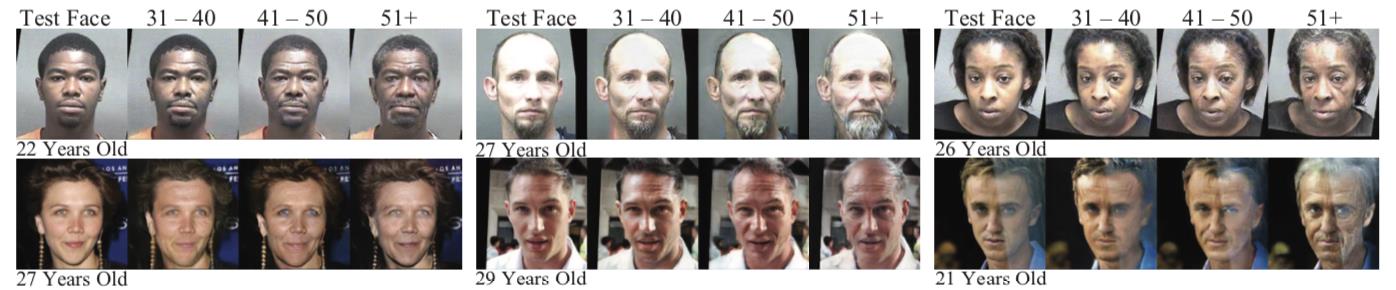
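The article does not say how the attribute vectors are embedded into the two networks. One common way to condition a convolutional network on a vector is to tile the vector over the spatial grid and concatenate it to the feature map along the channel axis. The sketch below illustrates that approach under this assumption. The function name `embed_attributes`, the tensor layout `(N, C, H, W)`, and the two-dimensional one-hot attribute are hypothetical and not taken from the paper.

```python
import numpy as np

def embed_attributes(features, attributes):
    """Append a per-image attribute vector to a feature map as extra channels.

    features:   (N, C, H, W) array of image or feature-map values.
    attributes: (N, A) array, one attribute vector per image.
    Returns an (N, C + A, H, W) array in which each attribute value is
    repeated at every spatial location of its own channel.
    """
    n, _, h, w = features.shape
    num_attrs = attributes.shape[1]
    # Expand (N, A) to (N, A, 1, 1), then broadcast over the spatial grid.
    tiled = np.broadcast_to(attributes[:, :, None, None], (n, num_attrs, h, w))
    return np.concatenate([features, tiled], axis=1)

# Two hypothetical 3-channel 4x4 inputs, each with a one-hot attribute.
feats = np.zeros((2, 3, 4, 4))
attrs = np.array([[1.0, 0.0],
                  [0.0, 1.0]])
out = embed_attributes(feats, attrs)
print(out.shape)
```

Applied at the generator input, a conditioning step of this kind lets the synthesis depend on the attributes of the young face; applied inside the discriminator, it lets the real-or-fake decision also depend on whether the image agrees with the supplied attributes.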

Extensive experiments are conducted on Morph and CACD2000. Qualitative results demonstrate that the method can synthesize lifelike face images that are robust to variations, and quantitative evaluations validate its effectiveness in aging accuracy as well as in identity and attribute preservation.

Sample results on Morph and CACD2000.

Full Text:

Attribute-aware Face Aging with Wavelet-based Generative Adversarial Networks

Contact:

ZHANG Xiaohan, PIO, Institute of Automation, Chinese Academy of Sciences

Email: xiaohan.zhang@ia.ac.cn